Political Bias Detection in News Text: Linear Regression vs. BERT

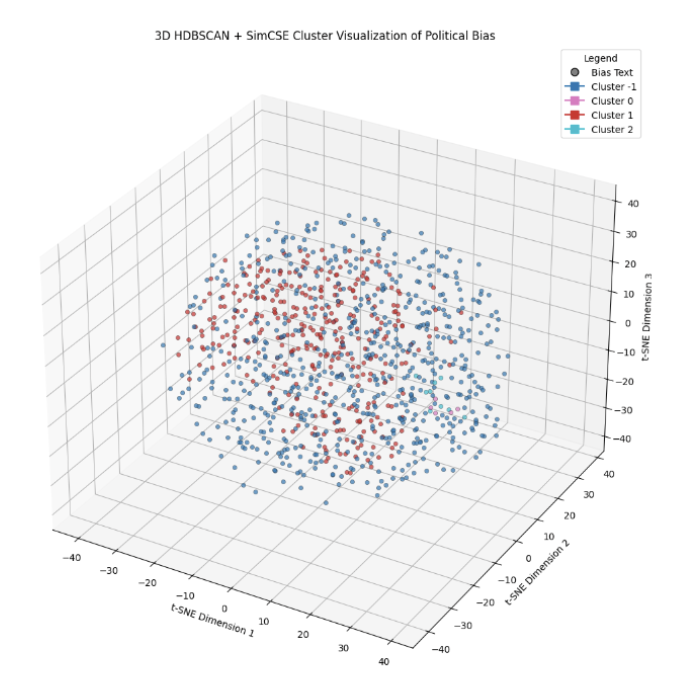

Comparative study of linear regression and BERT-based sentence embeddings for detecting political bias in news articles. Using HDBSCAN clustering on SimCSE-encoded text, we find that political lean has meaningful geometric structure in embedding space, structure that bag-of-words features and linear models fail to capture. The 3D t-SNE visualization shows that partisan separation is real but noisy: left-leaning and right-leaning articles form distinct but overlapping clusters, and hard-to-classify centrist or mixed-bias text occupies the overlap zone.
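Whether lean has geometric structure can be checked independently of any clustering by comparing average cosine similarity within lean groups against average similarity across them. The sketch below is a minimal stdlib illustration, not the method used in this study; the toy vectors are hypothetical stand-ins for SimCSE embeddings, and `lean_separation` is an illustrative helper name.

```python
import math
from itertools import combinations
from statistics import mean


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def lean_separation(embeddings, leans):
    """Mean within-lean cosine similarity minus mean cross-lean similarity.

    A positive value means articles sharing a lean sit closer together
    in embedding space than articles with different leans.
    """
    within, across = [], []
    for i, j in combinations(range(len(embeddings)), 2):
        sim = cosine(embeddings[i], embeddings[j])
        (within if leans[i] == leans[j] else across).append(sim)
    return mean(within) - mean(across)


# Hypothetical toy vectors standing in for SimCSE sentence embeddings.
toy_embeddings = [
    [0.9, 0.1, 0.0],  # left
    [0.8, 0.2, 0.1],  # left
    [0.1, 0.9, 0.0],  # right
    [0.2, 0.8, 0.1],  # right
]
toy_leans = ["left", "left", "right", "right"]

print(round(lean_separation(toy_embeddings, toy_leans), 3))
```

A value near zero would mean lean labels are scattered through the space; real embeddings with overlapping clusters should land somewhere between that and the clean toy case.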

| Approach | Text representation | Strengths | Limitations |
|---|---|---|---|
| Linear Regression | TF-IDF / BoW | Fast; interpretable coefficients; strong on unigram bias markers | Misses semantic context: the word "liberal" in a conservative article can push the score the wrong way |
| BERT + HDBSCAN | SimCSE contextual embeddings | Captures framing, tone, and semantic context; finds structure the linear model misses | Slower; less interpretable; cluster boundaries are soft |
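The linear model's main limitation is easiest to see with a hand-written unigram scorer. This is a minimal sketch with hypothetical weights (`WEIGHTS` and `bias_score` are illustrative names, not the study's fitted coefficients): each token contributes its coefficient regardless of context, so a conservative critique of "liberal" policy is pulled toward the left.

```python
import re

# Hypothetical unigram weights, as a linear model over bag-of-words features
# might learn them: negative = left-leaning, positive = right-leaning.
WEIGHTS = {
    "liberal": -1.5,
    "progressive": -1.0,
    "conservative": 1.0,
    "taxpayers": 0.8,
    "freedom": 0.6,
}
INTERCEPT = 0.0


def bias_score(text):
    """Linear bias score: intercept plus the summed weights of matched tokens."""
    tokens = re.findall(r"[a-z]+", text.lower())
    return INTERCEPT + sum(WEIGHTS.get(tok, 0.0) for tok in tokens)


# A right-leaning sentence that criticizes "liberal" policy.
critique = "The liberal spending plan fails taxpayers"
print(bias_score(critique))
```

Because the score is a sum of independent token weights, no setting of the weights can distinguish "liberal" as a self-description from "liberal" as a target of criticism; that context is what the contextual embeddings are meant to capture.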

Figure 1. 3D t-SNE projection of SimCSE embeddings with HDBSCAN cluster assignments. Blue = Cluster −1 (noise/centrist). Red = Cluster 1 (right-leaning). Overlap in the center reflects genuinely ambiguous political framing.
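The cluster readings in Figure 1 (−1 as noise/centrist, 1 as right-leaning) can be checked by cross-tabulating cluster assignments against reference bias labels. HDBSCAN assigns the label −1 to points it does not place in any cluster. The sketch below uses only the standard library; the labels are hypothetical, and the source of reference labels is an assumption since it is not stated here.

```python
from collections import Counter, defaultdict


def cluster_composition(cluster_labels, bias_labels):
    """Count reference bias labels inside each HDBSCAN cluster (-1 = noise)."""
    table = defaultdict(Counter)
    for cluster, bias in zip(cluster_labels, bias_labels):
        table[cluster][bias] += 1
    return table


def purity(counter):
    """Share of a cluster taken up by its most common reference label."""
    return counter.most_common(1)[0][1] / sum(counter.values())


# Hypothetical cluster assignments and reference bias labels.
clusters = [1, 1, 1, 1, -1, -1, -1]
bias = ["right", "right", "right", "left", "left", "center", "right"]

for cluster, counts in sorted(cluster_composition(clusters, bias).items()):
    name = "noise" if cluster == -1 else f"cluster {cluster}"
    print(name, dict(counts), f"purity={purity(counts):.2f}")
```

High purity in a labeled cluster supports reading it as partisan; a mixed noise group is consistent with the overlap zone described in the caption, though it does not by itself prove the text is centrist.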